Large-scale system-level digitalisation initiatives in the National Health Service in England: insights from three national evaluations

We provide an overview of the datasets in Table 1 and an overview of the findings in Table 2. Supplementary Table 1 provides illustrative quotes for each of the themes and sub-themes. It also maps TPOM codes, first-order concepts, second-order themes, and emerging aggregate dimensions.

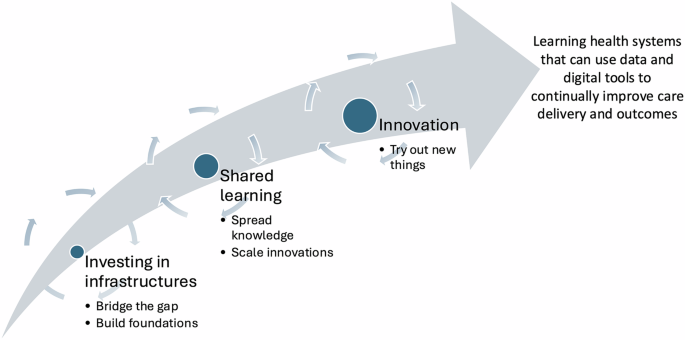

Each programme had a distinct focus, but all centred around an aspirational vision to orchestrate large-scale digitalisation through systemic intervention.

The NHS Care Records Service (NHS CRS), which was the backbone of the National Programme for IT (NPfIT), aimed to establish a foundational information infrastructure to promote interoperability across the health system. It included deploying centrally procured Electronic Health Record (EHR) infrastructures across all hospitals in England.

“The central vision of the Programme is the NHS Care Records Service, which is designed to replace local NHS computer systems with more modern integrated systems and make key elements of a patient’s clinical record available electronically throughout England (e.g. NHS number, date of birth, name and address, allergies, adverse drug reactions and major treatments) so that it can be shared by all those needing to use it in the patient’s care.” Department of Health21

The Global Digital Exemplar (GDE) Programme focused on implementing and optimising digital infrastructure (including EHRs and electronic prescribing systems), with an emphasis on generating learning that could be shared and scaled across the system. It was intended to achieve this by creating internationally recognised centres of excellence that would share their learning with hospitals seeking to achieve this status, thereby accelerating the implementation and adoption of EHRs.

“A Global Digital Exemplar is an internationally recognised NHS provider delivering improvements in the quality of care, through the world-class use of digital technologies and information. Exemplars will share their learning and experiences through the creation of blueprints to enable other trusts [hospitals] to follow in their footsteps as quickly and effectively as possible.” NHS England22

The third initiative, the NHS Artificial Intelligence Lab (AI Lab), was designed to stimulate the development and adoption of AI-based innovations within health and care. It invested in early- and later-stage development, implementation, and scaling of systems, and included efforts to develop national infrastructure and regulatory environments.

“The government has invested more than £2.3 billion into Artificial Intelligence across a range of initiatives since 2014. This portfolio of investment includes…£250 million to develop the NHS AI Lab at NHSX to accelerate the safe adoption of Artificial Intelligence in health and care.” National AI Strategy23

Our analysis revealed three aggregate dimensions reflecting the necessary investments in infrastructure, shared learning, and innovation for advancing the transformation of healthcare into a learning health system. These aggregate dimensions were derived from a set of second-order themes, which in turn were developed from first-order concepts generated through the coding process. For example, ‘investing in infrastructure’ was derived in recognition of the second-order theme of ‘infrastructural challenges’, which centred on the first-order concepts of ‘lack of forward planning’ and ‘basic information infrastructures and data quality’. The second-order themes structure the presentation of the findings in the remainder of this section and are presented as subheadings.

Infrastructural challenges

Despite their differing aims and structures, these programmes encountered similar infrastructural challenges. Infrastructural challenges spanned all TPOM dimensions, including technology (e.g., dependability, data quality), people (e.g., attitudes, engagement), organisations (e.g., leadership and management) and macro environmental factors (e.g., vision, political context).

Interoperability – the ability to exchange data across different systems – emerged as one of the most significant issues provider organisations faced in their digital transformation journeys. Interoperability challenges were often compounded by limited supplier cooperation and inconsistent adherence to standards. Even when systems were technically capable of exchanging data, meaningful, clinically useful data exchanges were not always straightforward, as care processes varied across settings and specialities, leading to duplication and potential safety risks.

“…appointments were put separately in the cardiology system and appointments were put separately in EPR [Electronic Patient Record]. And if you were lucky, they were the same. But obviously they weren’t some of the time… And it was just…data quality nightmare. Potentially unsafe.” Non-clinical Digital Leader, GDE Evaluation

However, the programmes differed in their emphasis on interoperability. In NPfIT, interoperability was a central objective underpinning its ambitious vision. By contrast, in the GDE Programme and the AI Lab, interoperability remained important but was not a defining measure of success; these initiatives were more focused on specific technologies and local change. In these cases, interoperability tended to emerge as a by-product rather than being pursued as a primary goal.

Robust information infrastructures, including reliable Wi-Fi, functional EHRs, and high-quality data, are a prerequisite for the effective implementation of new systems. Yet these foundational elements were often overlooked or not prioritised in favour of more high-profile or cutting-edge technologies. This oversight was particularly exemplified in the AI Lab evaluation, where the performance of AI tools was highly dependent on the quality and readiness of local infrastructures, many of which were not fit for purpose.

“…something as simple as investing, yet more in the digital infrastructure…because there’s no way that we’re going to see a marked change in AI if actually they don’t have a digital infrastructure.” Policy Maker, AI Lab Evaluation

The timing of each programme shaped how innovations and infrastructures were perceived. During NPfIT, EHRs were still relatively novel, particularly in hospitals, as foundational infrastructures. By the time of the GDE Programme, some hospitals were re-implementing these systems, and the focus had shifted to optimising and effectively using upgraded infrastructure. When the AI Lab was launched, EHRs had become commonplace, while AI was reaching the peak of its hype cycle. At this stage, EHRs were no longer viewed as novel technologies but as established components of the healthcare infrastructure. Once embedded, hospitals became increasingly reliant on these systems.

Developing an integrated information infrastructure calls for long-term vision and planned investment over decades. However, pursuing this vision was frustrated by a system that did not support long-term planning. Key challenges included annualised budgets, change funded through a succession of episodic programmes, strategic decision makers preferring to fund new programmes rather than extend existing ones, leadership turnover, and rapidly changing policy priorities. As a result, investments were inconsistent, leading to an incomplete, fragmented infrastructure that struggled to support efforts to promote data sharing and innovation. The underlying challenges did not change over time and continue to influence the delivery of ambitious change programmes in the health service to this day.

Although programmes faced some common technical challenges, most challenges were sociotechnical. We will discuss these in turn.

Evaluation and learning

Evaluation was an inductive theme that emerged alongside the TPOM dimensions. A common issue across programmes was the lack of good-quality baseline data. This made it difficult for programme managers and organisational stakeholders to assess outcomes, evidence progress and justify future investment.

“… what I understand is that the initial business case sort of promised a lot, but it didn’t lead into a set of creation of baseline metrics.” Manager, AI Lab Evaluation

Qualitative evidence of progress was much easier to obtain but difficult to measure and therefore received limited attention from high-level decision makers. For example, the GDE Programme provided strong evidence that learning can accelerate adoption and improve implementation outcomes. We observed two levels of learning. First, there was learning within a programme, which allowed local adaptation and then the wider application of that experience. This was facilitated through cross-organisational networks involving similar technologies, populations, or professional groups. Second, there were some efforts to carry lessons and experience forward across multiple programmes.

“We spent, you know, a two-hour session understanding, with the right people in the room, what (their GDE partner) did… it’s taken them five years to develop it and we did it in, you know, in one year.” Clinical Digital Leader, GDE Evaluation

While learning emerged as one of the most valuable outcomes of all three programmes, it proved difficult to evaluate and measure. There was limited evidence of structured learning being captured and applied either within individual programmes or across different initiatives. In many cases, measurement efforts focused on what was most easily quantifiable.

“This whole thing around benefits realisation is really a bugbear of mine. Because if they want a good qualitative evaluation, then we need to do that separately rather than look at it from a milestone perspective and also give it time to embed to see whether it benefits people”. Clinical Digital Leader, GDE Evaluation

Across multiple programme evaluations, lessons identified were often strikingly similar, yet they were rarely applied in subsequent practice. As a result, there was a recurring loss of organisational memory, with programmes repeatedly facing the same challenges without learning from past efforts.

We also observed substantial variation across programmes in how evaluation and learning were approached. Although NPfIT included a multi-million-pound evaluation programme, little was done to integrate emerging evidence into delivery, and the programme’s public demise further limited lesson-sharing as attention shifted to cost recovery and blame. In contrast, the GDE Programme sought to learn from past shortcomings by introducing mechanisms such as funding gates, in which central support was conditional on demonstrated local progress, and by incorporating evaluation findings into ongoing strategy. The AI Lab commissioned a largely retrospective evaluation that yielded valuable lessons but offered limited opportunities for formative feedback during delivery.

Unstable governance, and changing objectives and components over time

This theme spanned all TPOM dimensions, including technology (e.g., usability), people (e.g., work processes), organisations (e.g., leadership and management) and macro environmental factors (e.g., economic considerations and incentives). The multi-agency nature of programmes (including NHS England, the Department of Health and Social Care, and in some instances newly established delivery bodies such as NHSX and NHS Connecting for Health) offered important benefits, not just in leveraging larger investments but also in strengthening the link between policy and delivery. However, it also meant that initiatives were adversely affected by unstable governance, competing priorities, values and methods of different sponsors. Ministerial turnover, periodic organisational restructuring of delivery bodies and other external factors such as the COVID-19 pandemic (in the GDE Programme and the AI Lab), further contributed to this instability.

“It’s a multiagency programme and by their very nature there’s always a little ambiguity in the governance, but our governance seems to have drifted in change with the changing responsibilities of different bodies during the lifetime of the Programme. And that’s not unusual but it has been particularly disruptive I think in this programme. Even changes at the top of the shop, changes of Secretary of State have an impact on our programme, because the ultimate goal or importance that the Programme is given, changes with that change of direction.” Engagement Lead, GDE Evaluation

Some flexibility in programme direction and delivery is desirable as well as necessary. However, balancing the need to remain aligned with overarching programme goals, while also retaining the flexibility to respond to evolving priorities and circumstances, presented a recurring challenge.

In this respect, the presence of central delivery bodies in both NPfIT and the GDE Programme helped provide a clearer sense of direction and a more integrated strategy. The AI Lab, by contrast, lacked such central coordination and progress was further hampered by the COVID-19 pandemic, which intensified these challenges. The pandemic had only a marginal impact on the GDE Programme, as most of its activities were already nearing completion.

Multiple stakeholders with conflicting agendas

The multiple stakeholders with conflicting agendas theme spanned all TPOM dimensions, including technology (e.g., adaptability/flexibility), people (e.g., engagement), organisations (e.g., leadership and management) and macro environmental factors (e.g., political context). Programmes involved multiple stakeholders with differing, and at times conflicting, agendas. They were frequently technology-led, with limited consideration of the socio-organisational aspects of change and the specific needs and priorities of the wider system. This imbalance created a risk of stakeholder disillusionment, particularly when the introduced technologies failed to meet expectations or to deliver perceived value, despite being promoted as solutions that would simplify and enhance clinical and operational workflows.

“I think the main problem with the Programme is the expectation that it’s a little bit like going round installing three thousand copies of Microsoft Word and the following day everybody has got a Word processor. It just is not that simple”. Healthcare Manager, NHS CRS Evaluation

The temporal context also shaped these developments. When NPfIT was launched, implementers had limited appreciation of the organisational transformation required alongside technological change. By the time of the GDE Programme, there was growing recognition that such dimensions were critical, and this understanding was reinforced and professionalised through parallel initiatives. Although this awareness remained when the AI Lab was established, the discourse became more technology-driven, as AI increasingly dominated the political and economic agenda.

There was also a sustained tension between national and local priorities, which often complicated implementation and hindered progress. While national-level buy-in and leadership were critical to initiating and resourcing digital programmes, successful adoption depended heavily on local engagement and ownership.

“As a [hospital] vision, they talk about figures, ease of communication and all the buzz words. They put no thought into the nitty gritty and how clinical teams will use it and so with regard to the long-term vision, I can see what they see is a lovely neat system where we are all using computers. That is a very superficial view. To run [system] properly and the success of [system] depends on the clinicians. They need to come down a few layers and get people working with the clinicians from day one”. Healthcare Professional, NHS CRS Evaluation

Some programmes were more effective than others in bridging this gap, particularly where strong links existed between local organisations and the centre to facilitate the exchange of information. In the GDE Programme, for example, newly created Engagement Lead roles played this function effectively. By contrast, central delivery bodies such as NHS Connecting for Health, which oversaw NPfIT, struggled to achieve the same effect.

The combination of strong central oversight and a lack of flexibility and engagement sometimes hindered local innovation. For example, while the Healthcare Information and Management Systems Society (HIMSS) Electronic Medical Record Adoption Model (EMRAM) offered a structured roadmap for achieving digital maturity in the GDE Programme, it was often experienced as generic and prescriptive, limiting the capacity of organisations to develop tailored solutions that met their unique needs.

“I think pursuit of HIMSS has hindered us in our development. I think it’s delayed some things that we wanted to do and could have done sooner because we’ve had to focus on HIMSS”. Clinical Digital Leader, GDE Programme

Inflated expectations

The inflated expectations theme spanned all TPOM dimensions, including technology (e.g., performance), people (e.g., attitudes and expectations), organisations (e.g., leadership and management) and macro environmental factors (e.g., political context). Programmes were shaped by inflated expectations, often driven by ambitious, politically motivated implementation timelines that prioritised rapid delivery. As a result, those charged with delivery struggled to manage the expectations of frontline staff, who anticipated that adoption would be smooth and would deliver improvements fairly rapidly.

“…the difficulty has been managing expectations. I think the end users feel they have been lulled into a false sense of security so when we get this system, it was going to be doing all this and that, we were going to get it soon, the implication was that it would be fairly easy to implement, and it hasn’t been”. IT Manager, GDE Evaluation

However, some programmes and settings encountered greater difficulties than others, particularly where technologies were perceived as externally imposed, required major infrastructural upgrades with long lead times before benefits could be realised, or offered limited usability for frontline staff. This was especially evident in NPfIT, where one EHR system was deployed while still under development and was widely criticised for disrupting established workflows. Notably, this system is only now being phased out of the NHS. By contrast, digital exemplar hospitals in the GDE Programme often had planned system implementations on their roadmap and invested significant effort in adapting them to local contexts and engaging clinical stakeholders to support adoption. Many of these systems had already proven successful in other settings, which further facilitated uptake.

Ministerial announcements of imminent progress generated intense pressure to demonstrate rapid improvements. This did not align with the complex and time-consuming nature of digital transformation, which typically unfolds over a period of years or even decades. Those delivering programmes spent significant time and resources seeking to evidence progress and held those delivering local change to strict implementation deadlines. Because outcomes typically emerged more slowly than programme lifetimes allowed, they often evaluated proxy measures (e.g., inputs rather than outcomes). However, these proxies might not accurately predict the eventual desired outcomes.

“You know, they genuinely just want to measure something. They don’t really care what it is”. Policy Maker, AI Lab Evaluation

Overall, programmes were launched prematurely, with limited time for baseline assessments or thorough planning. They also ended too soon, before long-term benefits could be fully realised or demonstrated within the constrained political or funding cycles.

However, the technologies introduced across programmes varied, and so did their pathways to impact. EHRs implemented and optimised under NPfIT and the GDE Programme required long timelines before delivering measurable benefits, as they depended on large-scale organisational transformation. By contrast, many of the technologies supported by the AI Lab offered shorter routes to impact, often through automation and more targeted applications.
